“Privacy and Personalization in Voice Apps” is the title of your session at VoiceCon. In it, you address users’ need for personalization alongside their expectations of anonymity and data protection. Can you describe this area of conflict in a bit more detail?

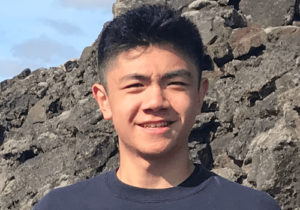

Jeremy Wilken: The dictionary defines privacy as ‘freedom from unauthorized intrusion’, but that leaves each person a lot of room to define the term for themselves. What counts as an unauthorized intrusion, and would we all agree? Essentially, the concept of privacy is rooted in various personal, cultural, and legal ideologies. It’s also quite emotional, particularly when privacy is violated.

We often think about a business’s responsibility to protect user data, but what about the user’s desire to control what is shared, how it is used, and how it affects their experience? We have a responsibility to design products and services that give people more power over their choices.

You will outline some guidelines to reconcile the user’s personalization needs with anonymity and privacy expectations. Could you give an example of such a guideline?

Jeremy Wilken: Let’s start with a simple example. When you go to a new restaurant for the first time, you likely don’t know what is on the menu, and the staff doesn’t know anything about your preferences. If a waiter starts talking with you about the menu and you ask for a suggestion, you would expect the waiter to have no information about you and therefore to ask you additional questions to narrow down the choices.

In the digital sphere, however, our lives are far more connected, and even if you are new to a service, it can likely infer or look up information about you. If we digitize the menu example, a mobile app with a ‘personal waiter’ could easily infer (or know outright, through its data practices) what you’ve eaten recently and suggest options based on those meals, even on your very first visit. This is similar to how advertising works, and it is why you typically see ads or content related to your recent history.

One problem here is that we abuse or break the social expectations of how interactions between people work. That erodes our sense of privacy and leads people to slowly accept overbearing personalization techniques that target individuals even when they have no previous history with a given service. To that end, one guideline is to model your interactions with users more carefully on the way relationships are built between humans.

Setting aside the questions around data privacy for a moment, let’s say a service knows enough about a user to make accurate meal suggestions. The service can still use those opportunities to engage the user in a way that lets them opt into providing personalization information. That makes it clearer to the user how their data is being used, and the system gets more accurate details as well. I think this is often ignored because competition is fierce and services are afraid they won’t get another chance to make a sale, so they come on strong and overstep the user’s right to privacy and anonymity.

Would you say that today’s voice assistants take privacy seriously enough?

Jeremy Wilken: That depends on your definition of privacy. The big players today run centralized services that require an active internet connection and therefore depend on a lot of data being shared. Many people struggle to understand how the technology works, and while there are controls over how data is collected and used, you have to enable most of the settings for the system to work fully.

The design of today’s assistants requires putting a lot of personal data into the system. There are some companies exploring alternative system designs that try to protect privacy, and I hope they will find meaningful patterns that can be brought to all systems.

What are the biggest challenges in developing a Voice App?

Jeremy Wilken: The biggest challenge is design and being able to fully consider the entire scope of the experience for the user. There is a lot to consider and most of us are unprepared for the complexities. Voice experiences can be surprisingly emotional, especially when things go wrong.

Voice experience failures are often framed as the user’s fault, such as when a system says “I don’t understand you”. This is an example of poor design that can drive users away from the voice app and from voice technologies in general. Beyond today’s core experiences, such as music, weather, and general knowledge questions, voice experiences will often require additional hardware integrations to be truly useful in your physical space, and we have a lot of work to do to make those integrations safe, secure, and affordable.

How do you see voice in general? Is it a niche field? Or are we on the brink of a revolution in the interaction with technology?

Jeremy Wilken: I believe that voice experiences will become an essential part of our daily lives. However, there are many challenges to overcome, namely hardware limitations, service fragmentation, contextual awareness, and user trust and privacy. For most use cases, I think voice is more like a computer keyboard: it enables input and communication, but it doesn’t stand alone.

I think the real value of voice lies in computers being able to understand people’s spoken thoughts and react to them, rather than in computers being great conversational partners. We have to make sure we focus on building value and improving quality of life, not just chasing technology for its own sake.

What is the core message of your session at VoiceCon?

Jeremy Wilken: Privacy has to be built into the foundations of our services and experiences. Designers can help make privacy a first-class concern in product design and development in a number of practical ways, such as adding privacy to user stories, documenting the data requirements of each feature, and designing experiences that use concepts like progressive disclosure. If we don’t bring privacy to the forefront of how we design and develop voice experiences, we will have done everyone a major disservice.

Thank you very much!